How to dramatically reduce your IHC staining times with the Leica Biosystems BOND-III

NEW STUDY SHOWS BOND-III UP TO 40% FASTER THAN BENCHMARK ULTRA

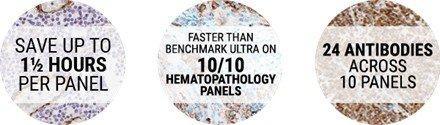

In a recent study, the Leica Biosystems BOND-III was found to complete cases up to 100 minutes faster than the Roche Tissue Diagnostics BenchMark ULTRA. The study examines panel turnaround time to assess the impact that fully automated IHC and ISH slide stainers such as the BOND-III and BenchMark ULTRA can have on delivering the full panel of slides to a pathologist. The testing covered 10 frequently run hematopathology IHC panels.

Webinar Transcription

DELIVER THE LAST SLIDE WITH THE FIRST

Case reporting timeliness is one metric of laboratory efficiency and performance, with immunohistochemistry recognized as a process that can impact this metric. This study shows that the BOND-III instrument processed common hematopathology panels up to 40% faster than the BenchMark ULTRA instrument, which would allow a pathologist to review cases in a timely manner and quickly order additional tests when required. In addition, it would allow more slides and panels to be stained within a shift, thereby reducing panel waiting time, staff overtime, or both.

Hello everyone and welcome to today's webinar, A Comparative Study of Turnaround Time for Common Hematopathology Panels on the Leica Biosystems BOND-III and the Roche Tissue Diagnostics BenchMark ULTRA Instruments. I now present today's speakers, David Roche, BOND Product Manager at Leica Biosystems, and Melissa Boyd, Global Support Applications Team Lead at Leica Biosystems. David and Melissa, you may now begin your presentation.

Okay, thanks, Alexis. We'll start off with the presentation. As Alexis said, welcome to today's webinar. We'll be looking at the results of a study we did earlier this year on the comparative turnaround times of the BOND-III and the BenchMark ULTRA. My name is David Roche. I'll take you through the first bit and then hand it over to Melissa to talk about workflow a bit later, and then we'll get into the nuts and bolts of the study.

Contents

Here are the contents. They'll closely follow the contents of the study that we conducted earlier this year. But as I said, before we get into the nuts and bolts of the study, it is worthwhile spending a few minutes looking at some of the complexities around the anatomic pathology workflow in general and IHC workflow in particular. And as said, please put in as many questions as you can and we'll have some time hopefully at the end for either myself or Melissa to answer those questions.

Introduction: Single Piece Flow in IHC

Over the last 10 to 15 years, there has been an increasing focus on healthcare costs across the globe, as exemplified by things such as the Affordable Care Act. Our anatomic pathology laboratories are not immune from this, often having to perform a delicate balancing act of budget constraints and just sheer volume of work sometimes without compromising on quality.

And because of these external drivers, some pathology associations have attempted to provide guidance on key performance metrics to improve patient care. One of these metrics is the timeliness of the patient reporting as a measure of the lab performance and efficiency. Our industry has adopted certain elements of the Toyota Production System methodology to improve quality, reduce the turnaround times for patients, and reduce costs.

The time it takes to complete the single piece, the patient case, has become an increasingly important unit of measurement. On the face of it, as you can see here, the workflow can seem deceptively simple.

- You get the request.

- You put the slides into a stainer.

- You stain the slides.

- You collate the slides.

- Then, send them back to the pathologist.

Problem is, this superficial workflow simplicity is rarely the reality. In anatomic pathology, a further complication of this single piece flow concept is the reality that a patient case is commonly ordered as a series of slides, and the pathologist ordinarily requires the full set of slides per patient before moving to make a report.

The number of slides in a patient case is most extreme in the immunohistochemistry workflow. Case requests in IHC are ordered as a series of tests with multiple antibodies and slides, often referred to as a panel. Providing pathologists with their full requested panel at once allows them to complete a report in one sitting. Pathologists typically wait for all the slides in the panel before reviewing and reporting.

Here we can see a stylized example of the full workflow of a histology sample from the receipt of the sample at the front end, all the way through to the pathologist's report. There are multiple steps to simply get to the pathologist an H&E, or a hematoxylin and eosin slide, or a series of H&Es. There are significant manual and automated elements within the process and the opportunity for variations in the way each lab tackles each step along the way.

In addition, immunohistochemistry is often an add-on test after examination of the routine stains, increasing the total turnaround time of reporting and often pushing turnaround times past some of the industry metrics. It's a complex workflow and many moving parts. And each one of these steps is a touch point, a person, a cost, an opportunity for error, and a waiting point if staff aren't available.

What is vitally important to note here is that, when it comes to completing a panel to be reviewed by a pathologist, it is the last slide of the panel that matters for measuring turnaround times. Here I'm going to hand over to Melissa to talk about some of the different ways that we can do workflow within an anatomic pathology lab before we get into the study. Over to you, Melissa.

Workflow Comparisons: Batch

Okay, thanks for that, David. Before we go into the study detail, I think it's just worth taking a bit of time to have a general look at how some labs get through that IHC workload. The two main approaches are batch and continuous. Here on this slide, we have an illustrated form of batch workflow. This has got quite clear cutoff times for new work, and for run times and completion times as well.

Ordinarily, the cut-off time for each batch to start running would be well communicated across the lab to the pathologist and the rest of the staff. As you would expect, the times for the completed task would be well known to everyone.

There are both advantages and limitations to this general workflow. Generally, its value in terms of predictability is sort of counterbalanced with its relative inflexibility in terms of workflow functions.

Workflow Comparisons: Continuous

We're going to talk about our continuous workflow. On this next graph, we've got illustrated a form of continuous workflow. In its purest form, each panel of requests would be dealt with as soon as it arrives, and the lab would be able to undertake all of the prep steps almost immediately to get the test running.

Just like batch workflow, there are both advantages and limitations to this form of workflow. To be truly continuous, the anatomical lab would probably need to be running 24 hours. No matter when the request came in, they could deal with them immediately. They would avoid sort of a large buildup of slides that need to be batched. There are some 24-hour labs out there, and thankfully I'm not in one. The complexity of the anatomical pathology workflow doesn't lend itself easily to continuous workflow.

As we saw earlier, there are so many steps prior to the staining of a slide or a panel of slides, and there still needs to be a pathologist on hand once we've completed all the slides in order to complete the full workflow.

A study by the College of American Pathologists indicated that 80% of labs had in place a midday cut-off for requests to be completed the same day. This is an indication of just how prevalent at least some forms of batch processing still are in the anatomical pathology world.

Counterbalance this with a separate study that suggests 94% of respondents prefer to complete the IHC staining on the same day of the request. You can start to see some of the challenges labs face in meeting these twin demands of having the same day testing and workload management, especially for any requests that arrive later than midday.
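The tension between a midday cut-off and same-day expectations can be made concrete with a small sketch. The function name, the 12:00 cut-off value, and the example arrival times are illustrative assumptions, not data from either of the studies mentioned.

```python
from datetime import time

# Assumed midday cut-off; real labs set their own cut-off times.
MIDDAY_CUTOFF = time(12, 0)


def completed_same_day(arrival: time, cutoff: time = MIDDAY_CUTOFF) -> bool:
    """Return True if a request arriving at `arrival` makes the same-day cut-off."""
    return arrival <= cutoff


# Hypothetical arrival times for four IHC requests.
arrivals = [time(9, 15), time(11, 50), time(12, 30), time(15, 5)]
next_day = [t for t in arrivals if not completed_same_day(t)]
print(f"{len(next_day)} of {len(arrivals)} requests roll over to the next day")
```

Under these assumed arrivals, every request after midday waits for the next day, which is exactly the group that the same-day preference is hardest to meet for.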

Workflow Comparisons: Per Antibody or Per Panel

Now we can have a look at our workflow comparisons per antibody or per panel. A lab could make a determination on how they group the individual tests within a case by either keeping the cases together or splitting the cases up into their requisite tests. Once again, you can see that both methods have advantages and limitations.

Complex Inputs and Causes

Let's have a look at the complexity on our next slide here. As you can see here, we have got a lot of inputs and causes. When we take all of this into consideration, the how and the when the requests arrive, the complexity of the requests, what the staffing and shift patterns look like throughout the day, how skilled the staff are, instrumentation being used, all those sort of things, we can start to see that there are so many inputs that a lab needs to consider before implementing a workflow solution that fits their situation. Now that we've talked about all the inputs and about batch and continuous processing, let's pass over to David and he can continue.

Navigating Automation

Thanks, Melissa. We'll have a quick look at trying to navigate automation before we get into the study. You can see that there's a lot of different solutions out there. There's a whole bunch of features to choose from before you even get to the decision-making process of what's going to fit your lab.

There's things like targeting efficiency, slide turnaround time, and throughput, but the impact of these combined features on case and panel turnaround times is quite difficult to assess on paper. The relative value each of you will place on a feature or a set of features will differ from lab to lab, and even from person to person, depending on your circumstances that you bring to the decision-making process.

The purpose of this study was to examine the relative panel turnaround times of the BOND-III and the BenchMark ULTRA fully automated IHC instruments, since the panel turnaround time is one way that we can try to measure lab efficiency.

The Study: Abstract

Here we have the full abstract of the study so that you can familiarize yourself with its focus and scope. I'll just reiterate, this study compares the turnaround times of 10 common hematopathology panels for two leading IHC slide staining systems, the BOND-III, and the Roche Tissue Diagnostics BenchMark ULTRA.

I would like to make it clear at this point that the sole focus of this study was to compare turnaround times. As you'll see and hear later in the presentation, no actual tissue was stained, and so we draw no conclusions, as far as this study is concerned, on the relative quality of the stained slide that would be produced from either instrument.

The Study: Batch, Case-Based Workflow

For this study, we chose to focus on a lab workflow that is essentially of a batching case-based design. This is a reasonably common workflow for IHC labs, and it does allow for an element of predictability in terms of cut-off times, inventory control, and staff resourcing.

In this scenario, it doesn't particularly matter how and when the requests are received, either in big batches or continuous smaller requests, as the workflow paradigm dictates how and when the instrument will be run. Importantly, this workflow method does allow for an element of walk-away time for the staff. The lab staff can be freed up for other important tasks within the lab. The workflow graphic on this page is not a precise representation of the study method; it's merely to illustrate more broadly what a batching workflow may look like in a lab with two to three runs per day, as well as an overnight run. This is quite a common way of determining how to do your work.

Whilst there are most certainly other basic workflow methodologies, and each lab will often have its own unique twist on the fundamental design, this design was chosen for this study for a number of reasons. It allowed us to make a study design that was relevant to many laboratories. It made it quite easy to set up and describe. It's reproducible. You could set up this study for yourselves if you wanted to verify the results. It reduced variables such as needing to swap out reagents and/or antibodies mid-run. It avoided having to use the ultimate reagent access software feature on the BenchMark ULTRA, which may have extended the panel turnaround times. And it enabled an efficient use of reagent inventory and avoided requiring more than one RTU container or dispenser per antibody to be in active use. That is quite common within labs, always only having one antibody open.

The Study: Antibody and Panel Selection

The overall approach allowed us to undertake a like-for-like analysis of turnaround times for each individual panel. This study, as mentioned earlier, comprises 10 panels, which are representative of common hematopathology disease states, and these were selected based on worldwide incidence rates of hematopathology. The panel of antibodies chosen for each of the ten disease states was taken principally from recommendations published by the National Comprehensive Cancer Network, or the NCCN.

The NCCN is a not-for-profit alliance of 27 leading cancer organizations and is globally recognized for its leadership in creating best practice clinical guidelines and resources for patients, clinicians, and other health care decision makers. In this study, the panels are referred to as Heme panels 1 through to 10. And whilst the panels do represent common test requests by pathologists to diagnose real disease states, we can't name them, basically because we manufacture many of the antibodies used and we need to avoid implying any diagnostic utility that would extend beyond the stated instructions for use. That's why we refer to these as panels and don't specifically name them.

Each panel was made up of between 6 and 11 antibodies. Across the board, we utilized 24 different antibodies within the 10 panels. The total was 88 slides. 23 out of the 24 antibodies were taken from the NCCN guidelines. The 24th antibody was recommended for inclusion into Heme panel 5 by Lu et al.

The composition of each panel, as you can see in the table shown here, was chosen for three main reasons. If you view both the Leica Biosystems and the Roche Tissue Diagnostics websites, both vendors arrange their antibodies in terms of pathology menu, and both have a heme panel. Heme panels exhibit quite a significant variation in terms of panel size. As a relevant study of single-piece flow, the range of sizes within the heme panels can really push the boundaries of ensuring the single-piece flow methodology is effective. And lastly, it just seemed as good a place as any to start. Given that heme panels form a common cohort of requests from general hospitals to academic centers to specialist reference centers, why not start here?

For this study, the slides were processed in three separate runs to reflect the common lab workflow known as batching. The slides for a new batch only start after all slides from the initial batch have finished. Each instrument ran identical batches.
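The batching rule described here, where a new batch starts only once every slide from the previous batch has finished, can be sketched in a few lines. This is a minimal illustration rather than the study's own tooling: the function name and the slide run times are hypothetical, and the sketch assumes no idle time between batches for unloading and reloading.

```python
from typing import List


def batch_schedule(batches: List[List[int]]) -> List[int]:
    """Return the elapsed minutes at which each batch finishes.

    Each batch is a list of per-slide run times in minutes. A batch ends
    when its slowest slide ends, and the next batch starts only then.
    Assumption: no gap between batches for unloading and reloading.
    """
    finish_times = []
    elapsed = 0
    for slide_minutes in batches:
        elapsed += max(slide_minutes)
        finish_times.append(elapsed)
    return finish_times


# Hypothetical slide run times (minutes) for three batches.
example = [[150, 152, 149], [151, 156], [150, 153, 155]]
print(batch_schedule(example))  # [152, 308, 463]
```

The point of the sketch is that one slow slide in a batch delays every batch after it, which is why the slowest protocol matters so much in a batched workflow.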

The Study: RTU Antibodies and Protocols

For each required marker, a ready-to-use or RTU antibody tied to the staining instrument, along with the factory-recommended protocol, was used wherever possible. Although the protocols were selected according to the RTU recommendations for both the BOND and the BenchMark instruments, diluent or water was used as a substitute for the actual reagent.

For the BOND-III, 20 of the 24 antibodies were available in the BOND RTU format. As you can see in the table, four antibodies were not available in the RTU format. Ordinarily, in these instances, if a lab offers that test, they will source the antibody either as a concentrate and then they optimize it themselves, or they would send them out. More likely, they would optimize them as a concentrate.

For these four antibodies, the protocols, including the pre-treatment and the incubation times, were taken from an external clinical laboratory where they were in regular use.

For the BenchMark ULTRA, 22 of the 24 antibodies were available in RTU format. And for one of them, CD8, we skipped out one step, the Ultra Block step, simply because we didn't have room on the reagent carousel for the reagent. This meant going against the factory recommended protocol for CD8. Since this study wasn't focused on quality, but workflow, we felt this was a reasonable change to make. In any case, it did make the protocol for CD8 slightly shorter, so it had no impact on the result.

For the other two antibodies not available in the RTU format, we used the shortest protocol in the panel that they were part of to ensure that they didn't impact the overall turnaround time either. The BenchMark system ordinarily or primarily offers two different detection systems, the OptiView and the UltraView. For each antibody, the detection system listed in their IFU was chosen. Wherever the IFU listed both, the OptiView detection system was selected as this represents their newest detection technology available for the BenchMark instruments.

The instruments were pre-loaded with all the reagents and slides to allow all slides to be started together, as would be the case in a batch workflow. The BOND-III tests were performed here in Melbourne by Leica Biosystems. The BenchMark ULTRA tests were performed by an experienced third-party testing organization, and both instruments were operated in accordance with their respective operator instructions, and the instrument software versions are recorded in the white paper.

Turnaround Time Calculation – Slide vs Case

Next is the turnaround time calculation, slide versus case, which you can see here. In simple terms, we timed the runs from the time the panel was started on the instrument to when the panel was finished on the instrument. For the individual slide turnaround times, we did the same thing, but measured at the slide level, not at the complete panel level.

We didn't include any of the pre-processing time, such as the time it takes to enter all the slide details, slide labeling, placing the slides on instruments, reagent setup, et cetera. Nor did we include any of the post-processing time, such as removal of the liquid coverslips, coverslipping, et cetera. The main reason for this is that these tasks were considered too open to interpretation and variability amongst individual operators to keep the study fair and unbiased. It's not to say they're not important, but for us it was outside the scope of the study.
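The two measurements described above can be sketched as follows. This is a minimal illustration under stated assumptions: the function names, marker labels, and timestamps are all hypothetical, and only on-instrument time is counted, in line with the scope described.

```python
from datetime import datetime
from typing import Dict, Tuple

Slide = Tuple[datetime, datetime]  # (start, finish) on the instrument


def slide_tat_minutes(start: datetime, finish: datetime) -> float:
    """Slide TAT: minutes from instrument start to instrument finish."""
    return (finish - start).total_seconds() / 60


def panel_tat_minutes(slides: Dict[str, Slide]) -> float:
    """Panel TAT: from the earliest slide start to the latest slide finish."""
    panel_start = min(start for start, _ in slides.values())
    panel_finish = max(finish for _, finish in slides.values())
    return slide_tat_minutes(panel_start, panel_finish)


# Hypothetical timestamps for a three-slide panel started together.
t0 = datetime(2024, 1, 1, 8, 0)
panel = {
    "marker_a": (t0, datetime(2024, 1, 1, 10, 29)),
    "marker_b": (t0, datetime(2024, 1, 1, 10, 33)),
    "marker_c": (t0, datetime(2024, 1, 1, 10, 36)),
}
print(panel_tat_minutes(panel))  # 156.0
```

In this invented panel the slide turnaround times are 149, 153, and 156 minutes, and the panel turnaround time equals the slowest of them.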

The Study Results – Panel TAT

Let's look at the results. The first result we can see here is the panel turnaround time; the time it takes to complete all the slides within each heme panel. Have a look at the spread here. The panel turnaround times on the BOND-III were consistent, ranging from only 149 minutes to 156 minutes, so a seven-minute spread. Whereas the panel turnaround times on the BenchMark ULTRA varied more broadly, with the range spreading from 189 minutes up to 250 minutes, or over four hours.

If you take these as an average, the respective mean panel turnaround time for the BOND-III was 2 hours 33 minutes, and for the BenchMark ULTRA, 3 hours 59 minutes. The BOND-III was on average 86 minutes faster than the BenchMark ULTRA, or 36% faster. Three of the 10 panels were more than 100 minutes faster on the BOND-III compared to the BenchMark ULTRA, and eight of the 10 panels were between 93 and 101 minutes faster.

Heme panel five had the smallest difference in mean panel turnaround time, with the BOND-III being 33 minutes faster.
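As a quick check on the averages, the two reported means of 2 hours 33 minutes and 3 hours 59 minutes imply a gap of 86 minutes. The sketch below assumes the percentage is expressed relative to the BenchMark ULTRA mean, which is the interpretation that reproduces the quoted 36%; the helper name is illustrative.

```python
def to_minutes(hours: int, minutes: int) -> int:
    """Convert an hours-and-minutes duration to whole minutes."""
    return hours * 60 + minutes


bond_mean = to_minutes(2, 33)   # 153 minutes, as reported
ultra_mean = to_minutes(3, 59)  # 239 minutes, as reported

difference = ultra_mean - bond_mean
# Assumption: "faster" is measured against the BenchMark ULTRA mean.
percent_faster = 100 * difference / ultra_mean

print(difference, round(percent_faster))  # 86 36
```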

Results – Individual Slide TAT

Now we'll look at the results from the individual turnaround time or individual slide turnaround time perspective. The average slide turnaround time for the markers on the BOND-III was 146 minutes, and the average turnaround time for the markers on the BenchMark ULTRA was 164 minutes, a difference of only 18 minutes. However, the variability was much higher on the BenchMark ULTRA. Across all markers, turnaround times on the BenchMark ULTRA showed 148 minutes spread, or 2 hours 28 minutes spread.

The BOND-III kept a narrow spread of only 17 minutes. The wide distribution of individual antibody completion times on the BenchMark ULTRA, with 65% of the antibodies tested taking longer than the longest BOND-III antibody, is the reason why the BenchMark ULTRA panels took, on average, close to an hour and a half longer to complete than the BOND-III panels. So just as a reminder, it's the slide with the slowest turnaround time that determines when the panel is completed, and then when the whole thing can be sent to the pathologist for review.
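The two statistics used in this comparison, the spread of slide turnaround times and the share of one instrument's slides that finish later than the other's slowest slide, can be sketched as below. The per-marker values are invented for illustration and are not the study's data; they were chosen only so that the spreads match the 17 and 148 minutes quoted above.

```python
from typing import List


def spread(minutes: List[int]) -> int:
    """Difference between the slowest and fastest turnaround time."""
    return max(minutes) - min(minutes)


def share_slower_than(reference: List[int], candidates: List[int]) -> float:
    """Fraction of `candidates` that exceed the slowest value in `reference`."""
    slowest_reference = max(reference)
    slower = [m for m in candidates if m > slowest_reference]
    return len(slower) / len(candidates)


# Hypothetical per-marker turnaround times in minutes (not the study's data).
instrument_a = [140, 145, 150, 157]
instrument_b = [120, 150, 165, 180, 268]

print(spread(instrument_a))  # 17
print(spread(instrument_b))  # 148
print(share_slower_than(instrument_a, instrument_b))  # 0.6
```

Note that the two averages can sit fairly close together while the panel times diverge, because a panel waits on its slowest slide rather than its average one.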

Results – Slide TAT Impact on Panel TAT

If we dig a little deeper into the link between individual antibody turnaround times and panel turnaround times, it's worth noting that eight out of the 10 panels specified CD10 as one of the markers. CD10 had the longest turnaround time on the BenchMark ULTRA, at a little over four hours. There's no single feature, I would say, that makes the BOND-III generally faster than the BenchMark ULTRA. But if you look at the length of the antigen retrieval steps, it's a reasonably good predictor in terms of turnaround time.

The one-and-a-half-hour step for the CD10 on the BenchMark ULTRA doesn't compare particularly favorably in terms of turnaround time with the 20-minute retrieval step on the BOND-III when both protocols are run to the manufacturer's guidelines. Panel 4, which you can see here, didn't contain CD10 and was still 49 minutes slower overall, with six out of the seven antibodies having a longer turnaround time on the BenchMark ULTRA than the BOND-III.

Discussion – Daily Workload

Another way we can measure efficiency is the time elapsed, or the time it took to process all 88 slides on the instruments. The BOND-III was able to process the three batches totaling the 88 slides in around 7.6 hours, while the BenchMark ULTRA completed the same three batches in 12 and a half hours. This data would indicate that you could get an additional run of up to four of these kinds of panels, essentially another full batch, on the BOND-III in the same time it took for the BenchMark ULTRA to run the 88 slides. To put it another way, a 36% efficiency advantage for the BOND-III instrument.
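The claim of room for an additional run can be checked roughly from the reported elapsed times. The sketch below assumes that one extra BOND-III run takes about the reported mean panel turnaround time of 2 hours 33 minutes and ignores setup time between runs; the variable names are illustrative.

```python
bond_hours = 7.6    # reported elapsed time for 88 slides on the BOND-III
ultra_hours = 12.5  # reported elapsed time for 88 slides on the BenchMark ULTRA
bond_mean_panel_minutes = 153  # reported mean panel TAT (2 h 33 min)

# Time left over on the BOND-III while the BenchMark ULTRA is still running.
spare_minutes = (ultra_hours - bond_hours) * 60

# Assumption: an extra run takes roughly the mean panel turnaround time.
extra_full_runs = int(spare_minutes // bond_mean_panel_minutes)

print(round(spare_minutes))  # 294
print(extra_full_runs)       # 1
```

Under these assumptions the spare time of a little under five hours covers one further full run, which is consistent with the description of essentially another full batch.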

If you look at it from the viewpoint of a complete shift, when we consider things like overtime, the BOND-III would allow all 10 heme panels tested in this study to be completed within an eight-hour period, whereas the BenchMark ULTRA would only complete seven out of the 10. That is three more cases, or 30% of the 10 panels tested, ready for diagnosis within a standard eight-hour shift.

Whilst it is true that there are several ways to set up an IHC workflow within the lab, there may be sound reasons why a lab needs to adopt a batch workflow method, such as inventory management and management of staff resources. This study showed that under the test conditions described here, the BOND-III can complete the full panels up to 40% faster than the BenchMark ULTRA, offering predictability in panel completion times at or around two and a half hours. That would allow the lab to manage their resources and, most importantly, play their part in rapid case reporting, permitting clinicians to quickly provide patients with some certainty.

Summary

Case reporting timeliness is one metric of lab efficiency and performance, with IHC, or immunohistochemistry, recognized as a process that really can impact this metric. This study shows that the BOND-III instrument processed common heme panels up to 40% faster than the BenchMark ULTRA, which would allow pathologists to review cases in a timely manner and quickly order additional tests if required.

In addition, it would allow more slides and panels to be stained within a shift, thereby reducing either panel waiting time or staff overtime, or potentially both. A patient case, not an individual slide, is the true single piece in the process that defines efficiency and process quality. Therefore, the turnaround time of the last slide of a case is a critical measurement of efficiency.

In this study, the BOND-III delivered the last slide faster than the BenchMark ULTRA in all the panels tested.

Most importantly, I hope that this webinar gives you some food for thought in determining what it is that your lab truly needs to make a difference to team productivity, lab management, and ultimately, I guess, the accuracy and the timeliness of the diagnostic reports.

Feature lists rarely provide a real understanding of how a solution might be suitable, or how it may fit each unique situation especially in a complex environment like an anatomic pathology laboratory where there are still many manual and many automated components. I would very much urge you to do as much research as possible, and wherever possible, try before you buy. That concludes the webinar. We're ready now to take some questions. Alexis, if any have come through?

Questions & Answers

Yes, thank you, David and Melissa, for your informative presentations. We will now start the live Q&A portion of the webinar. If you have a question you'd like to ask, please do so now. Just click on the ask a question box located on the far left of your screen, and we'll answer as many of your questions as we have time for. Let's get started.

What would happen to the BOND-III turnaround times if the IHC request from the pathologist didn't arrive in nice, neat batches that effectively allowed you to fill up each instrument?

That's an excellent question. One of the reasons a form of batching is still so prevalent in anatomic pathology is that the requests do arrive at non-predictable, non-uniform rates. It depends on what clinics are going on, traffic, there's a whole bunch of factors.

If I show you one of the previous slides, this workflow does try to deal with some of the lumpiness in the way the requests come in by having certain cutoff times. This allows the lab to optimize and to some extent to manage their workload. There are multiple ways to deal with each and every workflow, but we just wanted to make sure when we're doing this comparison that it was fair and unbiased. That's why we designed the study the way it is. By and large, the BOND-III had turnaround times of two and a half hours per antibody across the board, which is consistent considering there are over 20 different antibodies in the study.

It would be great to run this kind of study on your set of circumstances. In fact, that's probably the only real way to know what solution is better for you, and whether these findings would hold true for your set of circumstances. I'm confident they would, but the proof of the pudding will most certainly be in the eating. If you'd like to get in contact outside this meeting, I'd be happy to speak to you about how a similar study could be set up in your lab. We'd be more than happy to do that.

Have you tried to see how the results would change if you moved to a continuous workflow?

That's another excellent question. In short, no, but it would be interesting to see. One of the reasons we did set this study up the way that we have is it meant we took out a fair bit of the complexity when we think about each instrument, so we could make it fair and unbiased.

For example, loading all the reagents prior to us starting meant we didn't have to try and work with the BenchMark ULTRA's reagent access software. It was difficult to predict from run to run when it would give us access to the reagent carousel. We didn't want to negatively influence their results by adding this variable into the mix. And just like the previous answer, I'd love to hear from any of you if you'd like to undertake this kind of study using your set of circumstances, and really kick the tires on whether these findings hold up for you.

I would add that we do hear from a lot of people that they are running a continuous workflow. But when you dig a little deeper, there's often a series of cutoff times. Just because we're continually all doing something involved in getting these requests to the pathologist doesn't necessarily mean we're running a truly continuous workflow. There's something about the complex nature of the anatomic pathology workflow that sets it apart from the other pathology disciplines. It's why I got into it in the first place, and it's what makes it so rewarding. It's a wonderful mix of art and science. I hope that answers the question.

Why didn't you also assess the quality?

Yeah, that's another interesting question. I was kind of wondering when that one would come up. There are two main reasons. We used RTUs in the study wherever possible. This implies a certain level of quality of the stained slide anyway, from both us and from Roche. There are other studies out there, internal EQA schemes, that deal with the question of quality. We felt it would be more meaningful to add the topic of panel turnaround times to the wider debate instead of adding to the debate about quality.

I would say quality in many cases is in the eye of the beholder when it comes to IHC. We would be very, very happy to run these kinds of studies in your lab with your circumstances, with your slides and everything else to see, one, if the quality matches your expectations and, two, whether the turnaround times also hold up within your set of circumstances. That's why we didn't do a quality assessment as well.

When we go around various labs and speak to pathologists and lab managers, they always are 100% focused on quality. That is the number one metric that people are interested in. I would have to say, by and large, people are reasonably happy with whichever vendor they choose when it comes to quality, when the instruments work. What vexes them and what keeps them up at night is trying to work out how they're going to get through those slides whilst maintaining that quality. That's why we thought we would focus on workflow and turnaround time.

Thank you, David and Melissa. Do either of you have any final comments for our audience?

Just one final thought. It is difficult to look at a set of features for instrumentation, especially in anatomic pathology, where there's still a largely manual element to it. I really do very much urge you all to not just look at what we all say on paper, but try and understand how these instruments, these machines, these tools will work for you in your set of circumstances, and most certainly try before you buy. Not every machine fits every situation.

Thank you again, David Roche and Melissa Boyd for your time today and your important research. Before we go, I'd like to thank the audience for joining us today and for their interesting questions. Until next time, goodbye.

Leica Biosystems content is subject to the Leica Biosystems website terms of use, available at: Legal Notice. The content, including webinars, training presentations and related materials is intended to provide general information regarding particular subjects of interest to health care professionals and is not intended to be, and should not be construed as, medical, regulatory or legal advice. The views and opinions expressed in any third-party content reflect the personal views and opinions of the speaker(s)/author(s) and do not necessarily represent or reflect the views or opinions of Leica Biosystems, its employees or agents. Any links contained in the content which provides access to third party resources or content is provided for convenience only.

For the use of any product, the applicable product documentation, including information guides, inserts and operation manuals should be consulted.

Copyright © 2026 Leica Biosystems division of Leica Microsystems, Inc. and its Leica Biosystems affiliates. All rights reserved. LEICA and the Leica Logo are registered trademarks of Leica Microsystems IR GmbH.